GIS, Demographics, and Data Science Consulting

Walker Data is a data science consultancy specializing in geospatial and demographic data support for your business.

Please reach out to kyle@walker-data.com for consulting assistance with the following topics:

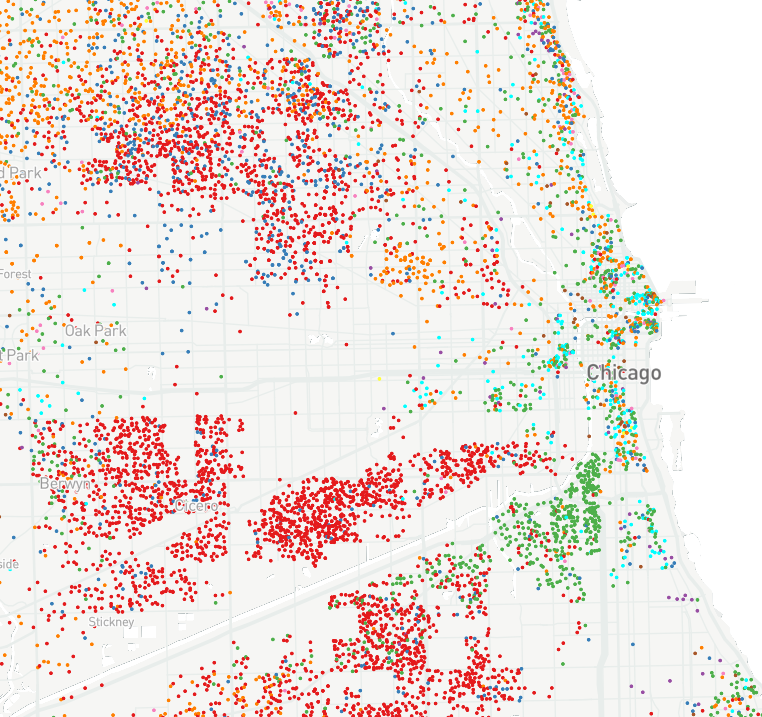

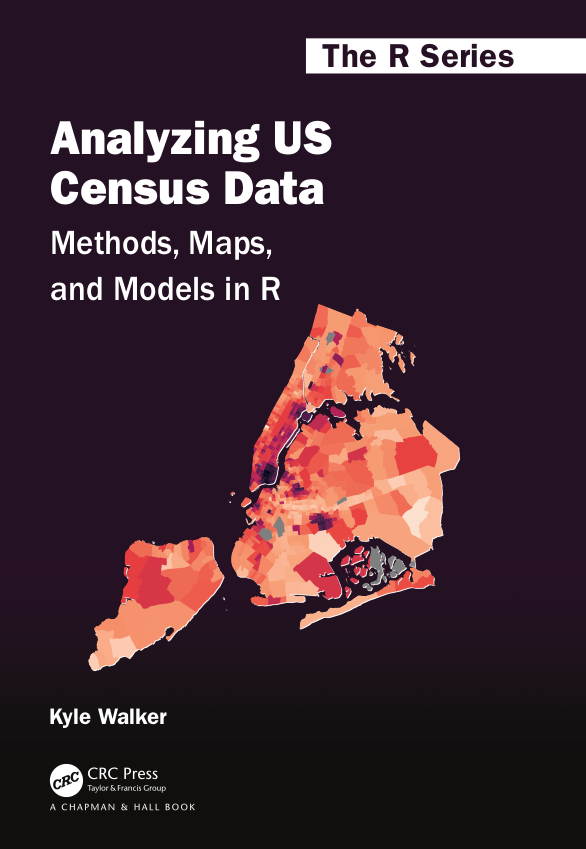

- Demographic analysis / Census data

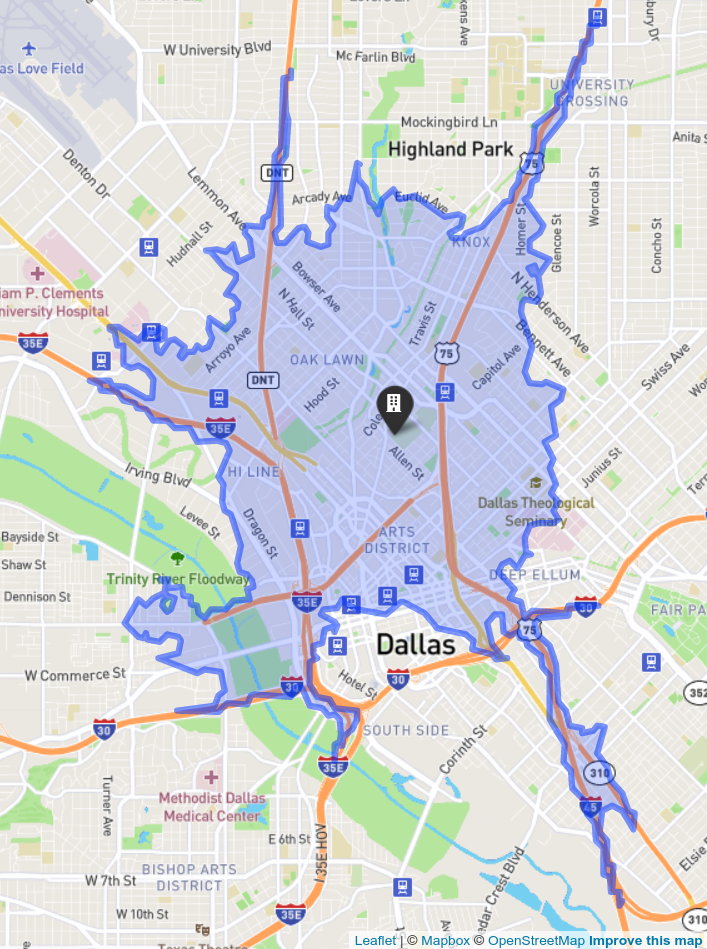

- Business and location intelligence

- Geographic Information Systems (GIS) and spatial data science

- Custom data science workshops, including training in the R and Python programming languages

To receive ongoing updates from Walker Data, consider signing up for the Walker Data mailing list: